In November last year, Chancellor Philip Hammond delivered his Autumn Budget Statement, which included some radical changes to the legislation that governs driverless cars in the UK. AutoMate’s Harrison Boudakin unpacks what these reforms mean for the future.

Perhaps more than any of the 21st century’s other great technological visions, the prospect of the ‘driverless car’ remains both deeply fascinating and the source of a thousand unanswered questions. It has been sold to us – quite vigorously – as the natural ‘next stage’ of the motor car’s evolution, and we have certainly seen a rapid acceleration in the development of the physical technology that will be required to bring autonomous transportation to life.

Yet the spectrum of unknowns goes far beyond the engineering hurdles we have already conquered. Giving up the controls has cultural, legal and psychological implications – and these grey areas must be clarified if we are ever to nurse the totally self-driving car to a safe and profitable fruition.

All of which brings us to the UK. Specifically, it brings us to Chancellor Philip Hammond’s announcement, which set out changes to the legislation that currently governs the development of driverless cars. Broadly speaking, the new laws he announced will allow prototypes to be tested on public roads without – for the first time – any human operator behind the wheel. Hammond’s proposal comes as part of a package of funding for “future vehicle development”, designed to shift the calculus of Britain’s automotive sector towards these “emerging” technologies.

In many ways, this proposal does thrust the UK right into the peloton of nations that are currently leading autonomous vehicle development. Compared to a number of other European countries and some US states, Britain’s revised system will offer manufacturers new freedom to test and improve the behaviour of their ‘intelligent vehicles’ in the real world. This comes as a welcome crack of light for the UK car industry, at a time when the unrelenting greyness of the Brexit fallout continues to dampen market confidence.

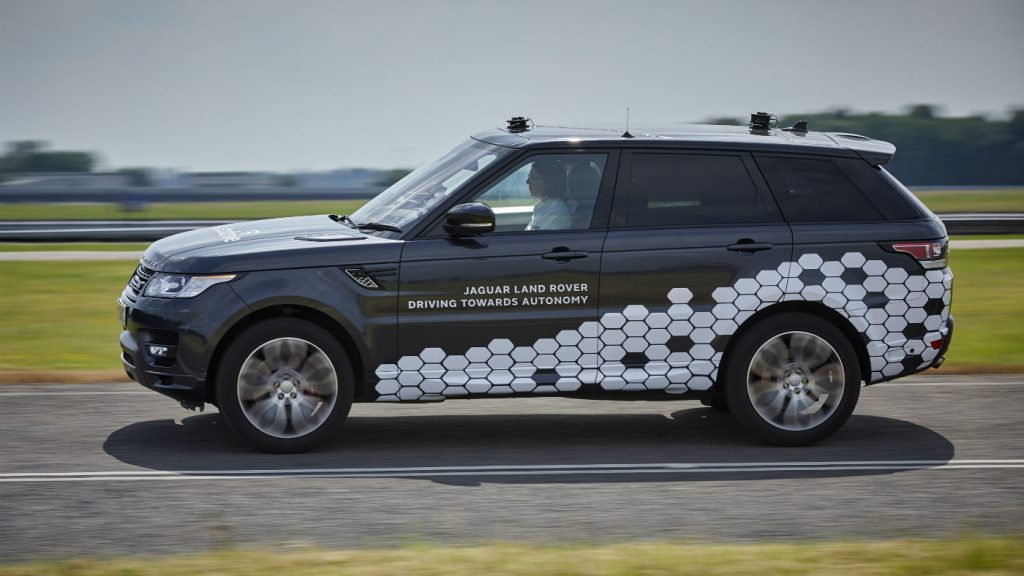

It’s worth noting, as well, that Britain has had something of a head-start when it comes to autonomous cars. Because the UK never ratified the Vienna Convention, there’s never been a law stating that a driver “must be in control of his vehicle at all times.” This means companies like Jaguar Land Rover already have the freedom to test self-driving cars on the public highways, albeit with a supervising driver present behind the wheel. In fact, its current crop of autonomous prototypes is now being trialled successfully on public roads around Coventry, and is said to be Level 4 capable (in other words, full self-driving, but with human supervision).

But this gives rise to an interesting point. You see, despite the government’s bullish desire to get totally unsupervised Level 5 cars on the road by 2021, the simple problem is that we have yet to figure out how to make one that can co-exist peacefully with human-driven vehicles. Now of course, if we were talking about cars that operated in a ‘closed network’ – a world where every variable is controlled – then that’d be a different story. But we’re not. Instead, engineers face the challenge of designing systems that have to respond quickly and creatively to any scenario that might eventuate out on the road. In other words, we need to be designing robots that are as smart as we humans are dumb and mistake-prone. That is a tall order.

At this stage, the only possible path to achieving this is via Artificial Intelligence. Analysts believe that by teaching computers to teach themselves, autonomous cars will eventually be able to develop a humanlike, ‘learn-as-you-go’ ability to improvise their decision-making on the fly. However, not only is this technology relatively nascent, it is by no means foolproof – after all, teaching computers to become more like us has the unfortunate side-effect of making them more prone to the mistakes we make too. This, of course, gives voice to the line of thinking which says ‘autonomous’ technology should be seen as less of a driver replacement, and more as a driver assistant. Many people assume that having a human and a computer working together on the task of driving will make for an extremely safe motoring experience.

Yet as has been proven in the world of aviation, the relationship between man and machine can often be perilously blurry. The problem is that humans are, psychologically speaking, not particularly good at ‘supervising’ technology. In fact, we are patently bad at monitoring automation. Study after study suggests we are far too quick to over-trust computers, and that our brains are simply not good at maintaining a clear picture of our surroundings, particularly once we start believing a machine has everything under control. This, then, has dangerous implications for semi-autonomous vehicles (Levels 2, 3 and 4), because in these cars, the driver still needs to be able to take control if and when the system trips up and misses something.

So where does that leave us?

Well, look at it this way. One of the foremost thinkers on aviation safety, the late Dr. Earl Wiener, once said that technology doesn’t eliminate errors, it merely changes the nature of the errors that are made. The fact that this statement applies so clearly to the autonomous car is – I think – evidence of the gulf between our expectations of the technology and the reality we actually face. In and of itself, allowing automakers to test unsupervised, Level 5 cars on the road by 2021 is a positive step for the industry – a vote of confidence in them from the government, who right now need to do everything they can to boost the morale of the British manufacturing sector. But even so, we do have to face the reality that Chancellor Hammond’s announcement is, in the end, but one line of working in a very complex piece of calculus, which the industry, the government and the legislators are undoubtedly going to take a long, long time to solve.

So don’t let go of the steering wheel just yet.